Hello there, I am Zhipeng!

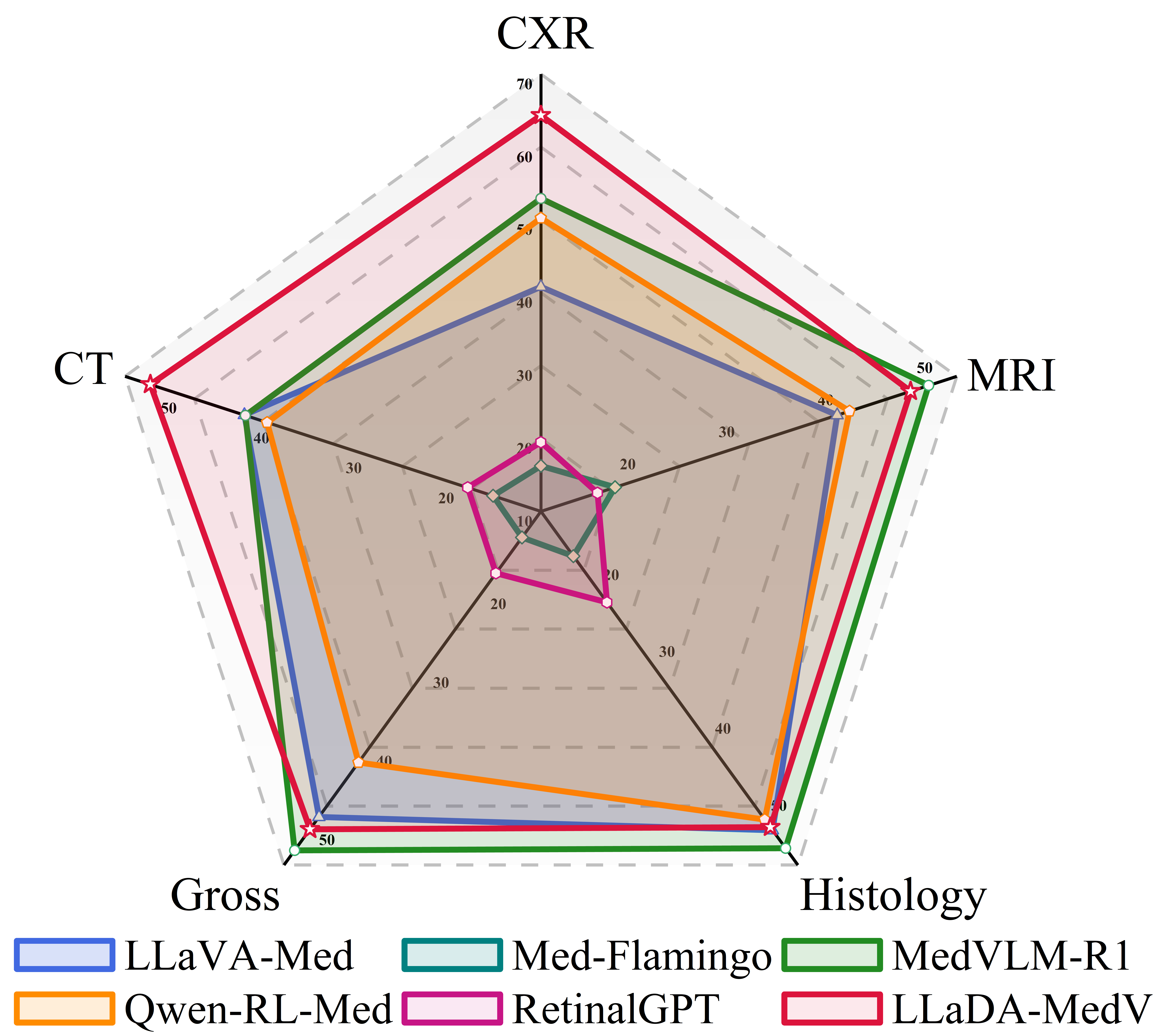

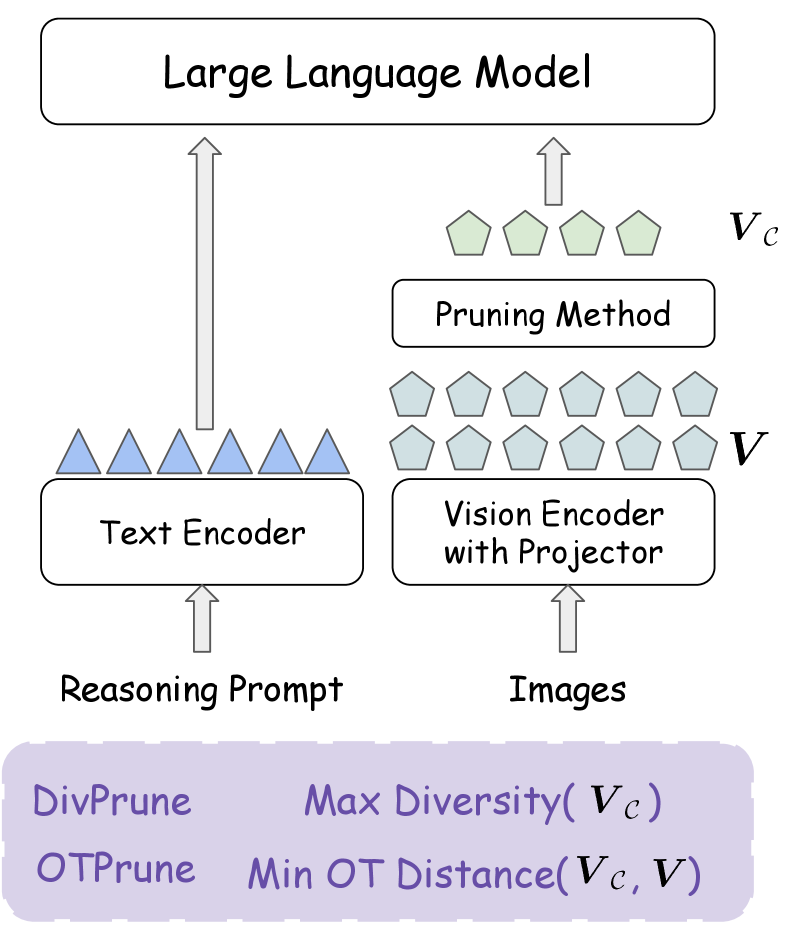

I am a seasoned scientist and engineer working in Machine Learning Systems, with a primary focus on optimizing large-scale distributed training and inference systems for foundation models and large language models (LLMs). Unlike many other ML Systems researchers, I also work on core modeling research, mainly efficient AI: LLM compression, knowledge distillation, LLM post-training methodologies, agentic reinforcement learning, and vision-language models (VLMs). Some of my research findings are published in top venues including EMNLP, CVPR, MLSys, ECCV, ICCV, WACV, ICML, and NeurIPS.

Representative ML systems that I have helped develop include DeepSpeed, one of the most popular OSS libraries for distributed LLM training (code maintainer and TSC Committer); fmchisel, a state-of-the-art foundation model optimization research library (e.g., knowledge distillation, compression, and quantization); and Liger Kernel (kudos to Byron Hsu, Yun Dai, et al. for leading the work).

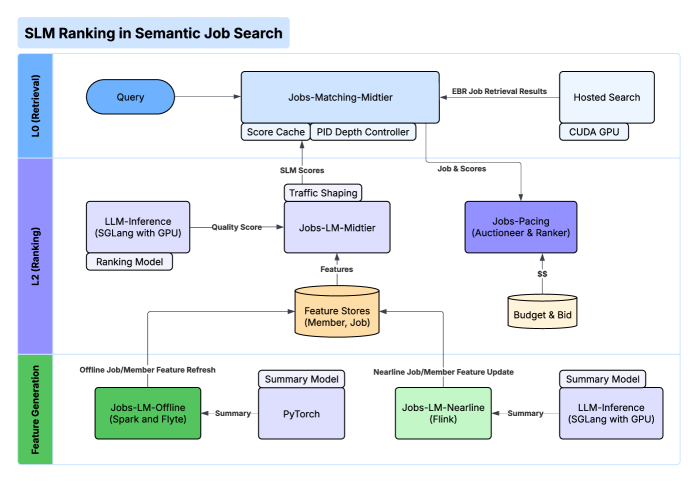

In the corporate world, I am a Senior Manager/Senior Staff Research Scientist leading the Foundational AI algorithms organization at LinkedIn (a subsidiary of Microsoft). Previously, I worked in the AWS AI organization at Amazon, where I led the SageMaker Applied Science Team, contributing to LLM inference, distributed training, and evaluation services. I was also a Tech Lead Manager/Staff Software Engineer at Google[X]/Google Research, where I built up the machine learning team for the moonshot project Chorus and worked on the AIDA project, building a coding agent using LLMs (now part of Gemini). I was also involved in PaLM model development work across Alphabet. Before that, I was a Staff Research Scientist at Apple, where I led the development of ML algorithms for sleep apnea detection on Apple Watch.

News

- We won the Best Paper Award at the Visual Art, Generative AI, and the Legal/Ethical Dilemma Workshop @ WACV 2026 for our paper “EZBlender: Efficient 3D Editing with Plan-and-React Agent”!

Talks and Tutorials

Building Efficient Large-Scale Model Systems with DeepSpeed: From Open-Source Foundations to Emerging Research

Olatunji Ruwase, Minjia Zhang, Masahiro Tanaka, Zhipeng Wang, ASPLOS 2026 Tutorial

Ray x DeepSpeed Meetup: AI at Scale

Olatunji Ruwase, Masahiro Tanaka, Vinay Sridhar and Zhipeng Wang, slides

Selected Recent Publications

Open Source Contributions

I am a TSC Committer and code maintainer for the DeepSpeed project, one of the most popular OSS libraries for LLM training. I also help maintain the Liger Kernel project. Feel free to raise issues and contribute PRs.